Elixir and Kubernetes: Introducing Aristochat

Distributed programming is the art of solving the same problem that you can solve on a single computer using multiple computers.

– Mikito Takada

Distributed systems trade an increase in complexity for benefits in scalability (if correctly implemented!). Two new and popular pieces of technology are helpful in implementing and operating distributed systems: Elixir and Kubernetes. Elixir is a functional programming language built on the Erlang VM. Like Erlang, Elixir is well suited to running distributed and fault-tolerant systems. Kubernetes is a platform, originally created by Google, for deploying, scaling, and running containerized applications. Kubernetes is great at working with microservices: it contains tooling for load balancing, executing rolling deployments, and monitoring. Let’s use them together to deploy small distributed applications running in containers. Meet Aristochat!

Aristochat is a slightly more advanced version of the typical Elixir tutorial project: a chat app. We’ve used the Phoenix Framework and its channel abstraction over WebSockets to create a simple chat server. Clients connect and join a channel. They send messages to the server, and the server broadcasts them to all clients connected on that topic. Thanks to the power of Phoenix, we’re able to get this with remarkably few lines of code.

    defmodule Aristochat.RoomChannel do
      use Phoenix.Channel

      # Accept joins for any topic that starts with "rooms:".
      def join("rooms:" <> _room_name, _body, socket) do
        {:ok, socket}
      end

      # Rebroadcast each chat message to every client subscribed to the topic.
      def handle_in("chat_msg", %{"body" => body}, socket) do
        broadcast! socket, "chat_msg", %{
          body: body,
          username: socket.assigns.username
        }

        {:noreply, socket}
      end
    end

The above code is all it takes to handle clients joining and sending/receiving messages.

If you look closely, you can also see Elixir’s pattern matching at work. Because the function heads contain string literals, Elixir only executes a clause when the arguments match the pattern specified. If a client attempts to join a topic that doesn’t start with rooms:, no join clause matches and the call errors out. If we wanted to handle a different type of message besides chat_msg, we could define a new handle_in clause that matches the new event name and handles it in a different manner.

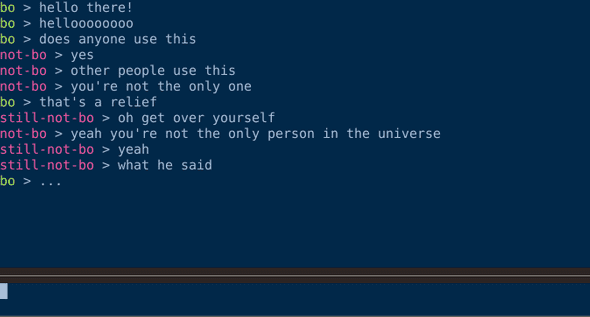

We’ve talked a little about our client, but let’s take a closer look at it. Most applications communicate with Phoenix channels via the Phoenix JavaScript library, which handles joining, sending and receiving messages, and keeping the connection alive for you. I’ve chosen to forgo this path and instead communicate with the server via a terminal-based UI written in Go. Why take the more complicated path? As part of learning about Elixir and Phoenix, I wanted to dive a little deeper into how things work and take a closer look at the interfaces of the components I’m using. Implementing my own Phoenix client has been helpful in achieving this goal. Below is the Message struct the client uses to communicate with the Phoenix server.

    // Message defines the structure of the messages sent across the channel
    type Message struct {
        // Topic defines the Phoenix topic we're communicating on (must be "phoenix" when sending heartbeats)
        Topic string `json:"topic"`
        // Event defines the type of event we're sending - one of phx_join, heartbeat, or chat_msg
        Event string `json:"event"`
        // Payload defines the body of the message coming across
        Payload Payload `json:"payload"`
        // Ref is a unique string that Phoenix echoes back in replies so they can be matched to requests
        Ref string `json:"ref"`
    }

Phoenix handles each message differently based upon the topic and event values.

If the event is phx_join, the join function is executed (assuming our topic passes pattern matching). If the event is chat_msg, the handle_in function broadcasts our message to all clients subscribed to the given topic.
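To make the topic and event routing concrete, here is a minimal Go sketch of how a client might encode a phx_join and a chat_msg message as JSON and decode a broadcast coming back. The Payload fields and the helper names (joinMessage, chatMessage, encode, decode) are assumptions for illustration; the real client's Payload type is not shown in this post.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// Payload is a hypothetical shape for the message body. The server code
// above only tells us that chat messages carry "body" and that broadcasts
// also carry "username".
type Payload struct {
	Body     string `json:"body,omitempty"`
	Username string `json:"username,omitempty"`
}

// Message mirrors the struct shown above.
type Message struct {
	Topic   string  `json:"topic"`
	Event   string  `json:"event"`
	Payload Payload `json:"payload"`
	Ref     string  `json:"ref"`
}

// joinMessage asks the server to run join/3 for the given room.
func joinMessage(room, ref string) Message {
	return Message{Topic: "rooms:" + room, Event: "phx_join", Ref: ref}
}

// chatMessage is matched by the handle_in("chat_msg", ...) clause.
func chatMessage(room, body, ref string) Message {
	return Message{
		Topic:   "rooms:" + room,
		Event:   "chat_msg",
		Payload: Payload{Body: body},
		Ref:     ref,
	}
}

// encode turns a Message into the JSON text sent over the websocket.
func encode(m Message) (string, error) {
	b, err := json.Marshal(m)
	if err != nil {
		return "", err
	}
	return string(b), nil
}

// decode parses a JSON frame received from the server.
func decode(raw string) (Message, error) {
	var m Message
	err := json.Unmarshal([]byte(raw), &m)
	return m, err
}

func main() {
	for _, m := range []Message{
		joinMessage("lobby", "1"),
		chatMessage("lobby", "hello", "2"),
	} {
		s, err := encode(m)
		if err != nil {
			fmt.Println("encode failed:", err)
			return
		}
		fmt.Println(s)
	}

	// A broadcast as the server might send it; broadcasts carry no ref.
	in, err := decode(`{"topic":"rooms:lobby","event":"chat_msg","payload":{"body":"hi","username":"ann"},"ref":null}`)
	if err != nil {
		fmt.Println("decode failed:", err)
		return
	}
	fmt.Printf("%s: %s\n", in.Payload.Username, in.Payload.Body)
}
```

Building the topic as "rooms:" plus a room name keeps every message inside the prefix that the join clause pattern matches on.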

What about the heartbeat messages? If those aren’t sent, the server will close our connection after a certain period of inactivity. To prevent that, we send a message with a topic of phoenix and an event type of heartbeat every five seconds.
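The heartbeat loop can be sketched in Go with a time.Ticker. This is a sketch under assumptions, not the actual client code: the send callback stands in for whatever writes to the websocket, and heartbeatJSON, runHeartbeat, demoInterval, and the empty Payload type are hypothetical names introduced here.

```go
package main

import (
	"encoding/json"
	"fmt"
	"strconv"
	"time"
)

// Payload is left empty here; heartbeats carry no body (assumed shape).
type Payload struct{}

// Message mirrors the struct shown above.
type Message struct {
	Topic   string  `json:"topic"`
	Event   string  `json:"event"`
	Payload Payload `json:"payload"`
	Ref     string  `json:"ref"`
}

// demoInterval keeps the demo quick; the post's client uses five seconds.
const demoInterval = 10 * time.Millisecond

// heartbeatJSON builds the keep-alive message for the reserved "phoenix" topic.
func heartbeatJSON(ref int) (string, error) {
	b, err := json.Marshal(Message{
		Topic: "phoenix",
		Event: "heartbeat",
		Ref:   strconv.Itoa(ref),
	})
	if err != nil {
		return "", err
	}
	return string(b), nil
}

// runHeartbeat calls send with a fresh heartbeat on every tick. It returns
// nil once done is closed, or the first error from encoding or sending.
func runHeartbeat(interval time.Duration, send func(string) error, done <-chan struct{}) error {
	ticker := time.NewTicker(interval)
	defer ticker.Stop()

	for ref := 1; ; ref++ {
		select {
		case <-done:
			return nil
		case <-ticker.C:
			msg, err := heartbeatJSON(ref)
			if err != nil {
				return err
			}
			if err := send(msg); err != nil {
				return err
			}
		}
	}
}

func main() {
	done := make(chan struct{})
	sent := 0

	// In a real client, send would write the frame to the websocket.
	send := func(msg string) error {
		fmt.Println(msg)
		sent++
		if sent == 3 {
			close(done) // stop the demo after three heartbeats
		}
		return nil
	}

	if err := runHeartbeat(demoInterval, send, done); err != nil {
		fmt.Println("heartbeat stopped:", err)
	}
}
```

Passing send in as a function keeps the timing logic separate from the transport, so the loop can be exercised without a running server.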

These are the two apps we’ll use. We’ll deploy Aristochat into a Kubernetes cluster and talk to it via our client. In our next installment, we’ll get Kubernetes stood up so that we can run Aristochat!